Database optimization is an ongoing process, and that cannot be changed! It needs regular attention to simplify processes and deliver the desired output without hurting long-term performance. But before we go any further, let us ask ourselves something: “Why do we need to optimize our databases? What is the end goal?” The simple answer is that every DBA wants their database system to respond faster.

Database optimization is not a one-time setup. It needs consistent monitoring, updates, and upgrades combined with a set of basic implementations. These two combined help DBAs achieve the best results and get quicker outputs.

Databases use specialized data structures to store information, which makes it easy to extract that information whenever required. One of the most popular databases, used by a majority of organizations and enterprises, is MySQL. MySQL performance tuning has multiple aspects. As a decision-maker, you need to be well versed in each phase of the process and in how to delegate tasks among your team to ensure maximum output.

So let us understand the different phases that can help you get that response time down and get more done in less time.

Step #1: Database Analysis

Before we get into the details of what needs to be done, it is essential to understand why it is required. And you can only solve a problem that has been identified and, more importantly, exists! To understand this, let us have a look at the database analysis life cycle.

We will look at the steps a designer follows when designing a database system. Each step also indicates how you should analyze an existing database system, its functioning, and any problems or issues it may face in the future.

1.1 Database Study

This usually involves all the factors that need to be considered when designing a database. These factors include the organizational operations, any plans for growth and expansion, and any problems and constraints the company might face in the foreseeable future. They also include the objectives of the system and how they align with the organizational aspirations. Finally, we need to define the boundaries and limitations that might affect the DB system.

Just as when designing a new database, these factors also affect an existing system. Over time, the requirements, the load, and the functions might change, which will lead to problems. But with a proper analysis of the situation that considers all possible scenarios beforehand, you can ensure that your database is ready to adapt to changes in demand and requirements. This phase of analyzing database performance will help you allocate resources and be prepared for rainy days.

1.2 Database Design

While the database is designed to fulfill the conceptual, logical, and physical needs of the organization, changes in those needs may require amendments to the design. These design changes are looked into on a situational basis.

1.3 Implementation and Loading

Initially, data is loaded into the system when a database is set up. This data set includes information as per the requirements of the industry. Over time, this data might become outdated and no longer serve its purpose. To avoid this situation, it is essential to check the information loaded into the system and ensure its validity.

1.4 Testing

You already know that testing is important and essential for identifying bugs and errors. But when working on database analysis, consistently testing the database will also help you identify patterns and recurring problems. Keep a lookout for these, as they can help you catch issues early on, before they lead to more significant issues in the future.

Step #2: Statistics Analysis

The optimizer is the heart of a relational DBMS. It forms a part of the SQL Tuning process that analyses SQL statements to determine the best access plan to satisfy each statement. This is done by analyzing each SQL statement to identify which columns and tables must be accessed. The optimizer then queries the system catalog and directory for statistics and system information to determine the best way to accomplish the requested tasks.

The system catalog contains statistics that the optimizer can use to optimize a statement. These statistics give the optimizer information about the state of the tables that will be accessed in the SQL statement being optimized.

The optimizer performs complex calculations based on many different pieces of information. Its functionality can be simplified by visualizing it as a four-step process.

- Verify the syntax of the SQL statement.

- Analyze the environment to optimize the way the SQL statement will be executed.

- Create machine-readable instructions to execute the optimized SQL.

- Execute the instructions, or save them for future execution.

Statistics for each table in the catalog, be it small or large, are the most valuable resource for any SQL optimizer. Statistics describe how the data in tables and indexes is distributed. The optimizer uses this information to determine the cheapest path that will satisfy a query. If the statistics are missing or outdated, the optimizer may choose a less efficient approach, which will increase the response time.
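To make this concrete, here is a minimal sketch of collecting optimizer statistics. It uses Python's built-in `sqlite3` module purely as a stand-in engine so that it runs anywhere; the table and index names are hypothetical. MySQL gathers statistics with `ANALYZE TABLE` and SQL Server with `UPDATE STATISTICS`, and each engine stores them in its own catalog format.

```python
import sqlite3

# In-memory SQLite database used only as a stand-in for a production engine.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, status TEXT)")
conn.executemany(
    "INSERT INTO orders (status) VALUES (?)",
    [(f"status_{i % 10}",) for i in range(1000)],
)
conn.execute("CREATE INDEX idx_orders_status ON orders (status)")

# Before ANALYZE runs, the statistics table does not exist yet.
stats_tables_before = conn.execute(
    "SELECT count(*) FROM sqlite_master WHERE name = 'sqlite_stat1'"
).fetchone()[0]

# ANALYZE gathers statistics and stores them in SQLite's catalog table.
conn.execute("ANALYZE")
stats = conn.execute("SELECT tbl, idx, stat FROM sqlite_stat1").fetchall()

for tbl, idx, stat in stats:
    # stat begins with the row count, followed by average rows per key value.
    print(tbl, idx, stat)
```

Re-running the statistics command after large data changes keeps this information current, which is the point the paragraph above makes about outdated statistics.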

Step #3: Index Optimization

The SQL optimizer is heavily dependent on the indexes that are defined for a table. Indexes can be a double-edged sword: too few indexes will hurt the performance of your SELECT queries, while too many will slow down your DML (INSERT, UPDATE, and DELETE). It is crucial to keep the number of indexes on each table balanced, and to choose the fields in each index and their order with care.

Composite Index

An index that contains more than one field is called a composite index. If you anticipate running queries with multiple fields in the WHERE clause, consider creating such an index. It pays off most when all the fields combined yield significantly fewer rows than the first field alone.
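As a rough illustration, the sketch below creates a composite index and asks the engine how it would run a two-field WHERE clause. It uses Python's `sqlite3` as a stand-in engine; the `orders` table, its columns, and the index name are assumptions made up for the example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders ("
    "id INTEGER PRIMARY KEY, customer_id INTEGER, status TEXT, total REAL)"
)
conn.executemany(
    "INSERT INTO orders (customer_id, status, total) VALUES (?, ?, ?)",
    [(i % 50, "open" if i % 4 == 0 else "closed", float(i)) for i in range(2000)],
)

# Composite index: customer_id first, then status.
conn.execute(
    "CREATE INDEX idx_orders_customer_status ON orders (customer_id, status)"
)

query = "SELECT id, total FROM orders WHERE customer_id = ? AND status = ?"

# The last column of each EXPLAIN QUERY PLAN row describes the access path.
plan_details = [
    row[3] for row in conn.execute("EXPLAIN QUERY PLAN " + query, (8, "open"))
]
print(plan_details)

# Both fields together match fewer rows than the first field alone.
first_field_only = conn.execute(
    "SELECT count(*) FROM orders WHERE customer_id = ?", (8,)
).fetchone()[0]
matches = conn.execute(query, (8, "open")).fetchall()
print(first_field_only, len(matches))  # 40 20
```

The field order matters: an index on (customer_id, status) can serve queries that filter on customer_id alone, but generally not queries that filter only on status.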

Clustered Index

A clustered index determines the physical order of a table. The index fields determine the sort order, which means the data is stored sorted by its index key. This is similar to a telephone directory, which arranges entries by last name. Because rows can be physically stored in only one order, a table can have only one clustered index. These indexes are handy for columns that are frequently searched over a range of values.

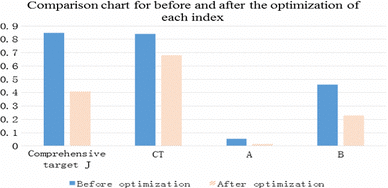

In a study published on ResearchGate, results were reported for various indexes before and after optimization. The results show a huge gap between the two.

Source: ResearchGate

While most databases support such an index, they use different terminology. Oracle calls it an Index-Organized Table (IOT), while Sybase, Microsoft SQL Server, and DB2 call it a clustered index. Index-related tuning also includes creating an index for a column once a missing index has been identified.
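The telephone-directory idea can be sketched as follows. This example uses Python's `sqlite3` as a stand-in, where a `WITHOUT ROWID` table stores its rows in primary-key order, which is SQLite's counterpart to a clustered index. The `phonebook` table and its rows are hypothetical, and the DDL for a clustered index differs in SQL Server, Oracle, and MySQL.

```python
import sqlite3

conn = sqlite3.connect(":memory:")

# WITHOUT ROWID keeps the rows themselves in primary-key order.
conn.execute(
    "CREATE TABLE phonebook ("
    "last_name TEXT, first_name TEXT, phone TEXT, "
    "PRIMARY KEY (last_name, first_name)) WITHOUT ROWID"
)
conn.executemany(
    "INSERT INTO phonebook VALUES (?, ?, ?)",
    [
        ("Young", "Ann", "555-0101"),
        ("Adams", "Bob", "555-0102"),
        ("Miller", "Cy", "555-0103"),
        ("Baker", "Di", "555-0104"),
    ],
)

# A range search on the clustering key reads one contiguous slice of the table.
range_query = (
    "SELECT last_name, phone FROM phonebook "
    "WHERE last_name BETWEEN 'A' AND 'C'"
)
plan_details = [row[3] for row in conn.execute("EXPLAIN QUERY PLAN " + range_query)]
print(plan_details)

in_range = conn.execute(range_query + " ORDER BY last_name").fetchall()
print(in_range)  # [('Adams', '555-0102'), ('Baker', '555-0104')]
```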

Step #4: Data-type optimization

SQL Server can be thought of as a storage, retrieval, and management system. How data is stored can make a big difference in how quickly it can be retrieved. So, how is SQL Server data stored?

Database records are kept on what are called pages, each 8 KB in size. There are many data types, and each one takes up a different amount of space on a page. Knowing which attributes each record contains, and what values they need to hold, lets you choose the smallest data type that fits each one.

SQL Server uses fewer resources to read data that is densely packed on each page, because the same number of rows fits in fewer pages. This operational efficiency is what performance tuning aims for.
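A back-of-the-envelope calculation shows why this matters. The sketch below estimates how many rows fit on one 8 KB page for two hypothetical column layouts, using SQL Server's fixed-length type sizes and 96-byte page header. It deliberately ignores per-row overhead and the row offset array, so real counts will be somewhat lower; treat it as an approximation rather than an exact storage model.

```python
PAGE_SIZE = 8192    # bytes in one SQL Server page (8 KB)
PAGE_HEADER = 96    # bytes reserved for the page header

# Storage size in bytes of a few fixed-length SQL Server data types.
TYPE_BYTES = {
    "INT": 4,
    "BIGINT": 8,
    "DATE": 3,
    "DATETIME": 8,
    "CHAR(100)": 100,
    "NCHAR(100)": 200,  # NCHAR uses two bytes per character
}


def rows_per_page(column_types):
    """Rough upper bound on rows per page, ignoring per-row overhead."""
    row_bytes = sum(TYPE_BYTES[t] for t in column_types)
    return (PAGE_SIZE - PAGE_HEADER) // row_bytes


# Hypothetical layouts holding the same logical data.
wide = rows_per_page(["BIGINT", "NCHAR(100)", "DATETIME"])
narrow = rows_per_page(["INT", "CHAR(100)", "DATE"])
print(wide, narrow)  # 37 75
```

Under these assumptions the narrower layout packs about twice as many rows into each page, so a scan of the same data touches roughly half as many pages.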

Step #5: Query optimization

SQL query optimization refers to the process of writing thoughtful SQL queries in order to increase database performance. During development, the amount of data that is accessed and tested is small, so database developers get quick responses to their queries. The problem begins when the project goes live and vast amounts of data flood the database. At that point, SQL queries can slow down and cause performance problems.

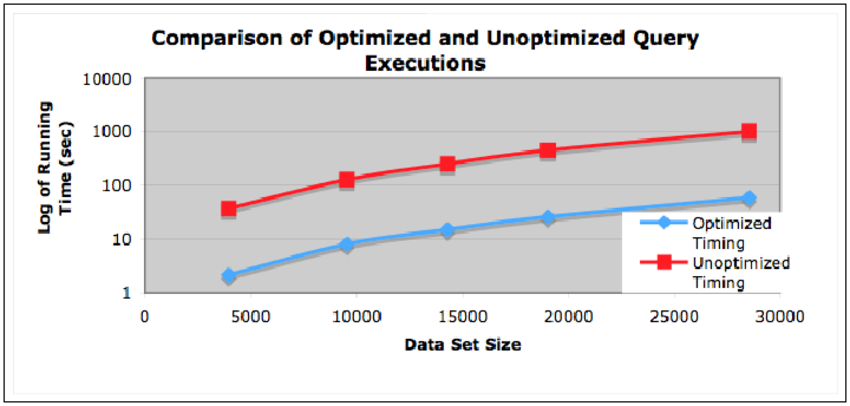

Even the slightest change can make a big difference in performance when working with large-scale data. The figure compares optimized and unoptimized query execution across multiple data sizes.

Source: Comparison – Optimization vs no-optimization

SQL performance tuning is a difficult task. Even minor changes, such as how an outer query is modified, can make a big difference in performance. Some of the best practices to keep in mind when optimizing queries include:

- Indexing: Ensure that the database is appropriately indexed for easy access.

- Select query: To avoid additional fetching load on your database, use SELECT with the specific columns you need instead of SELECT *.

- Running queries: Queries executed inside loops can slow down the sequence. Avoid them.

- Matching records: Use EXISTS() to check whether a matching record exists.

- Subqueries: Avoid correlated subqueries where possible. They are evaluated row by row, which can slow down the processing of SQL queries.

- Query Plans: Query plans give in-depth insight into what the database is doing while running a query. Examining the plan of a slow-running SELECT statement can reveal issues that you can then tackle directly.
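A few of these practices can be sketched together. The example below uses Python's `sqlite3` as a stand-in engine with hypothetical `customers` and `orders` tables. It selects only named columns, rewrites a correlated subquery as a join that returns the same rows, and compares the query plan of a lookup before and after adding an index. Plan output and the benefit of each rewrite vary by engine and data volume, so measure on your own system.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT, notes TEXT);
CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL);
INSERT INTO customers VALUES (1, 'Ada', 'a'), (2, 'Grace', 'b'), (3, 'Linus', 'c');
INSERT INTO orders VALUES (10, 1, 25.0), (11, 1, 40.0), (12, 3, 15.0);
""")

# Correlated subquery: the inner SELECT is evaluated once per customer row.
correlated = conn.execute("""
    SELECT c.id, c.name,
           (SELECT SUM(o.total) FROM orders AS o WHERE o.customer_id = c.id)
    FROM customers AS c
    ORDER BY c.id
""").fetchall()

# Equivalent join with GROUP BY, naming only the columns that are needed.
joined = conn.execute("""
    SELECT c.id, c.name, SUM(o.total)
    FROM customers AS c
    LEFT JOIN orders AS o ON o.customer_id = c.id
    GROUP BY c.id, c.name
    ORDER BY c.id
""").fetchall()
print(joined)  # [(1, 'Ada', 65.0), (2, 'Grace', None), (3, 'Linus', 15.0)]


def plan(sql):
    """Return the human-readable steps of the engine's query plan."""
    return [row[3] for row in conn.execute("EXPLAIN QUERY PLAN " + sql)]


lookup = "SELECT id, total FROM orders WHERE customer_id = 1"
plan_before = plan(lookup)  # full table scan
conn.execute("CREATE INDEX idx_orders_customer ON orders (customer_id)")
plan_after = plan(lookup)   # index search
print(plan_before, plan_after)
```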

Conclusion

As we have seen, database optimization has many sub-processes, and it is essential that you understand and acknowledge each phase of the process. This will not only help you break down and simplify the work of monitoring and optimizing your system but will also help you keep track of all the changes that are being made to it.

As a key decision-maker, it is of utmost importance that you make the right call when it comes to your MySQL performance tuning process. Doing so will establish a solid baseline for your system metrics, against which you can track your company’s progress. This comparison will prove to be very useful in the future, and you will thank yourself for having taken the necessary steps.

So, if you are unsure whether you are taking all the necessary steps to get the most out of your database systems, it is time you consult with the experts. We, at OptimizDBA, will analyze your strategies after getting a thorough understanding of your requirements and ensure that you choose the most viable options for a better future for your enterprise. Think no more and get in touch with one of our experts today.